Translator's note: The author of this article, Erkan Erol, an engineer at SAP, shares his study of how the kubectl exec command works, a command familiar to everyone who works with Kubernetes. He accompanies the whole algorithm with listings of the source code of Kubernetes (and related projects), which make it possible to understand the topic in the necessary depth.

One Friday, a colleague came to me and asked how to run a command in a pod using client-go. I couldn't answer, and I suddenly realized that I knew nothing about how kubectl exec works. Yes, I had some ideas about its internals, but I wasn't 100% sure they were correct, so I decided to sort it out. After studying blogs, documentation and source code, I learned a lot of new things, and in this article I want to share my findings and understanding. If something is wrong, please contact me on Twitter.

Setup

To create a cluster on a MacBook, I cloned ecomm-integration-ballerina/kubernetes-cluster. Then I corrected the node IP addresses in the kubelet configuration, because the default settings did not allow kubectl exec to work. You can read more about the main reason for this here.

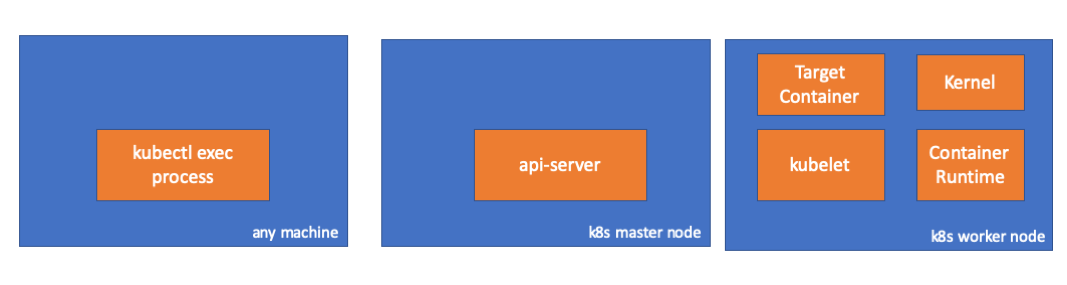

- Any machine = my MacBook

- Master IP = 192.168.205.10

- Worker node IP = 192.168.205.11

- API server port = 6443

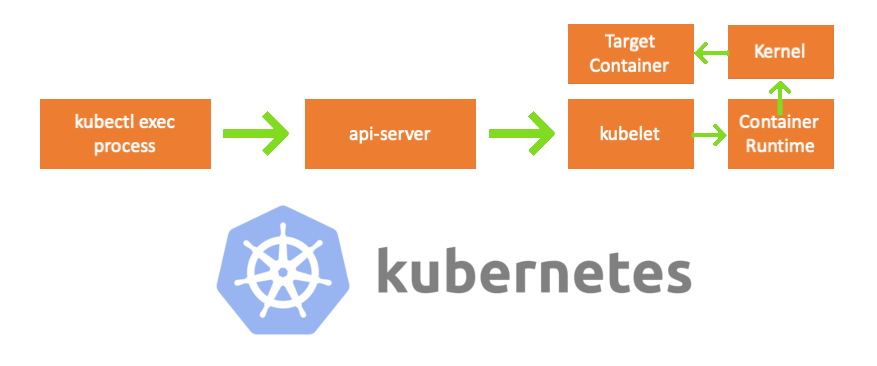

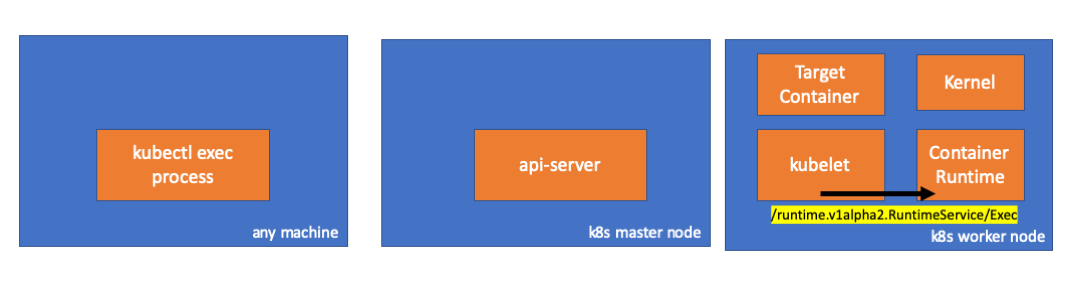

Components

- kubectl exec process: when we run "kubectl exec ...", this process is started. You can do this on any machine with access to the K8s API server. Translator's note: in the console listings below, the author uses the comment "any machine" to indicate that the commands that follow can be run on any machine with access to Kubernetes.

- api-server: a component on the master that exposes the Kubernetes API. It is the front end of the Kubernetes control plane.

- kubelet: an agent that runs on every node in the cluster. It makes sure that containers are running in a pod.

- container runtime: the software responsible for running containers. Examples: Docker, CRI-O, containerd…

- kernel: the OS kernel on the worker node; responsible for process management.

- target container: a container that is part of a pod and runs on one of the worker nodes.

What I found

1. Client-side activity

Create a pod in the default namespace:

```
// any machine
$ kubectl run exec-test-nginx --image=nginx
```

Then we run exec and, in the shell that opens, run sleep 5000 so that there is time for further observation:

```
// any machine
$ kubectl exec -it exec-test-nginx-6558988d5-fgxgg -- sh
```

A kubectl process appears (pid=8507 in our case):

```
// any machine
$ ps -ef |grep kubectl
501  8507  8409   0  7:19PM ttys000    0:00.13 kubectl exec -it exec-test-nginx-6558988d5-fgxgg -- sh
```

If we check the network activity of this process, we find that it has connections to the api-server (192.168.205.10, port 6443):

```
// any machine
$ netstat -atnv |grep 8507
tcp4       0      0  192.168.205.1.51673    192.168.205.10.6443    ESTABLISHED 131072 131768   8507      0 0x0102 0x00000020
tcp4       0      0  192.168.205.1.51672    192.168.205.10.6443    ESTABLISHED 131072 131768   8507      0 0x0102 0x00000028
```

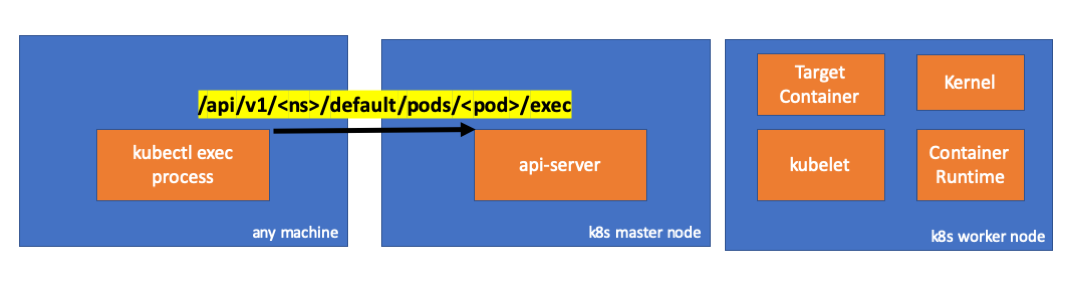

Let's look at the code. kubectl creates a POST request for the exec subresource and sends it as a REST request:

```go
req := restClient.Post().
	Resource("pods").
	Name(pod.Name).
	Namespace(pod.Namespace).
	SubResource("exec")
req.VersionedParams(&corev1.PodExecOptions{
	Container: containerName,
	Command:   p.Command,
	Stdin:     p.Stdin,
	Stdout:    p.Out != nil,
	Stderr:    p.ErrOut != nil,
	TTY:       t.Raw,
}, scheme.ParameterCodec)

return p.Executor.Execute("POST", req.URL(), p.Config, p.In, p.Out, p.ErrOut, t.Raw, sizeQueue)
```

(kubectl/pkg/cmd/exec/exec.go)
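To make the request above more concrete, here is a minimal sketch, using only the Go standard library, of the URL such a POST could end up with. The execOptions type and the buildExecURL function are hypothetical; the query encoding only approximates what scheme.ParameterCodec produces for PodExecOptions (one command parameter per argument, and in this sketch only the flags that are set are sent).

```go
package main

import (
	"fmt"
	"net/url"
)

// execOptions is a hypothetical, simplified mirror of corev1.PodExecOptions.
type execOptions struct {
	Container string
	Command   []string
	Stdin     bool
	Stdout    bool
	Stderr    bool
	TTY       bool
}

// buildExecURL sketches the URL of a pod's "exec" subresource.
// It is not kubectl's code; the real client relies on scheme.ParameterCodec.
func buildExecURL(apiServer, namespace, pod string, o execOptions) string {
	q := url.Values{}
	for _, arg := range o.Command {
		q.Add("command", arg) // each argv element becomes its own "command" parameter
	}
	if o.Container != "" {
		q.Set("container", o.Container)
	}
	flags := map[string]bool{"stdin": o.Stdin, "stdout": o.Stdout, "stderr": o.Stderr, "tty": o.TTY}
	for name, on := range flags {
		if on { // in this sketch, only flags that are set are sent
			q.Set(name, "true")
		}
	}
	u := url.URL{
		Scheme:   "https",
		Host:     apiServer,
		Path:     fmt.Sprintf("/api/v1/namespaces/%s/pods/%s/exec", namespace, pod),
		RawQuery: q.Encode(), // Encode sorts the parameters by key
	}
	return u.String()
}

func main() {
	// Roughly what "kubectl exec -it <pod> -- sh" asks for.
	fmt.Println(buildExecURL("192.168.205.10:6443", "default", "exec-test-nginx-6558988d5-fgxgg",
		execOptions{Command: []string{"sh"}, Stdin: true, Stdout: true, TTY: true}))
}
```

Running it prints https://192.168.205.10:6443/api/v1/namespaces/default/pods/exec-test-nginx-6558988d5-fgxgg/exec?command=sh&stdin=true&stdout=true&tty=true, which has the same path as the POST the api-server logs below.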

2. Activity on the master node

We can also observe the request on the api-server side:

```
handler.go:143] kube-apiserver: POST "/api/v1/namespaces/default/pods/exec-test-nginx-6558988d5-fgxgg/exec" satisfied by gorestful with webservice /api/v1
upgradeaware.go:261] Connecting to backend proxy (intercepting redirects) https://192.168.205.11:10250/exec/default/exec-test-nginx-6558988d5-fgxgg/exec-test-nginx?command=sh&input=1&output=1&tty=1
Headers: map[Connection:[Upgrade] Content-Length:[0] Upgrade:[SPDY/3.1] User-Agent:[kubectl/v1.12.10 (darwin/amd64) kubernetes/e3c1340] X-Forwarded-For:[192.168.205.1] X-Stream-Protocol-Version:[v4.channel.k8s.io v3.channel.k8s.io v2.channel.k8s.io channel.k8s.io]]
```

Note that the HTTP request includes a request to upgrade the protocol. SPDY makes it possible to multiplex separate stdin/stdout/stderr/spdy-error streams over a single TCP connection.

The API server receives the request and converts it into PodExecOptions (pkg/apis/core/types.go).

To perform the necessary actions, the api-server has to know which pod it should contact (pkg/registry/core/pod/strategy.go). Naturally, the endpoint data is taken from the node information:

```go
nodeName := types.NodeName(pod.Spec.NodeName)
if len(nodeName) == 0 {
	// ...
}
```

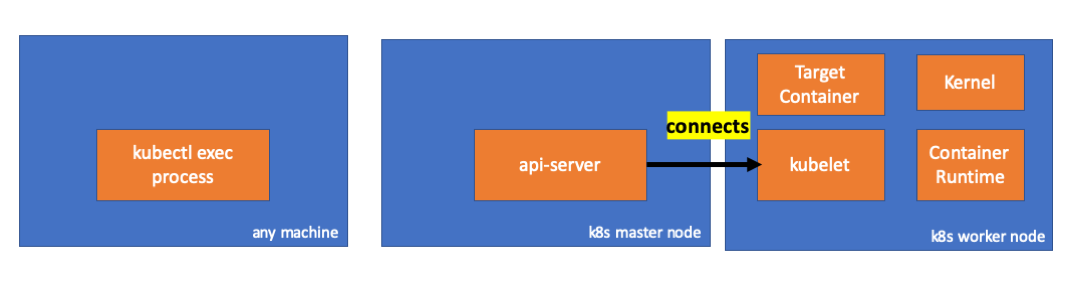

(pkg/registry/core/pod/strategy.go)

Hooray! The kubelet has a port (node.Status.DaemonEndpoints.KubeletEndpoint.Port) that the API server can connect to (pkg/kubelet/client/kubelet_client.go).

From the documentation, Master-Node Communication > Master to Cluster > apiserver to kubelet:

These connections terminate at the kubelet's HTTPS endpoint. By default, the apiserver does not verify the kubelet's serving certificate, which makes the connection subject to man-in-the-middle attacks and unsafe to run over untrusted and/or public networks.
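Putting the pod's node name, the node's address and the kubelet port together, the backend URL from the upgradeaware.go log line above can be reproduced with a small sketch. The podInfo, nodeInfo and streams types and the kubeletExecURL function are hypothetical; the input, output and tty parameter names are taken from the logged URL, and error is the name Kubernetes uses for the stderr flag (the ExecStderrParam constant in k8s.io/api/core/v1). The real api-server code path differs in detail.

```go
package main

import (
	"errors"
	"fmt"
	"net"
	"net/url"
	"strconv"
)

// Hypothetical, trimmed-down views of the objects the API server consults.
type podInfo struct{ Namespace, Name, NodeName string }

type nodeInfo struct {
	InternalIP  string
	KubeletPort int
}

type streams struct{ Stdin, Stdout, Stderr, TTY bool }

// kubeletExecURL assembles the kubelet exec URL for a container of a pod.
func kubeletExecURL(pod podInfo, nodes map[string]nodeInfo, container string, cmd []string, s streams) (string, error) {
	if pod.NodeName == "" {
		// Same idea as the len(nodeName) == 0 check: an unscheduled pod has no kubelet.
		return "", fmt.Errorf("pod %s does not have a host assigned", pod.Name)
	}
	node, ok := nodes[pod.NodeName]
	if !ok {
		return "", errors.New("unknown node " + pod.NodeName)
	}
	q := url.Values{}
	for _, arg := range cmd {
		q.Add("command", arg)
	}
	for name, on := range map[string]bool{"input": s.Stdin, "output": s.Stdout, "error": s.Stderr, "tty": s.TTY} {
		if on {
			q.Set(name, "1")
		}
	}
	u := url.URL{
		Scheme:   "https",
		Host:     net.JoinHostPort(node.InternalIP, strconv.Itoa(node.KubeletPort)),
		Path:     fmt.Sprintf("/exec/%s/%s/%s", pod.Namespace, pod.Name, container),
		RawQuery: q.Encode(), // sorted by key: command, input, output, tty
	}
	return u.String(), nil
}

func main() {
	nodes := map[string]nodeInfo{"k8s-node-1": {InternalIP: "192.168.205.11", KubeletPort: 10250}}
	pod := podInfo{Namespace: "default", Name: "exec-test-nginx-6558988d5-fgxgg", NodeName: "k8s-node-1"}
	u, err := kubeletExecURL(pod, nodes, "exec-test-nginx", []string{"sh"}, streams{Stdin: true, Stdout: true, TTY: true})
	if err != nil {
		panic(err)
	}
	fmt.Println(u)
}
```

With the values from this cluster it prints the same URL as the "Connecting to backend proxy" log line.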

Now the API server knows the endpoint and opens a connection (pkg/registry/core/pod/rest/subresources.go).

Let's see what happens on the master node.

First, we find out the IP of the worker node. In our case, it is 192.168.205.11:

```
// any machine
$ kubectl get nodes k8s-node-1 -o wide
NAME         STATUS   ROLES    AGE   VERSION   INTERNAL-IP      EXTERNAL-IP   OS-IMAGE             KERNEL-VERSION      CONTAINER-RUNTIME
k8s-node-1   Ready    <none>   9h    v1.15.3   192.168.205.11   <none>        Ubuntu 16.04.6 LTS   4.4.0-159-generic   docker://17.3.3
```

Then we find out the kubelet port (10250 in our case):

```
// any machine
$ kubectl get nodes k8s-node-1 -o jsonpath='{.status.daemonEndpoints.kubeletEndpoint}'
map[Port:10250]
```
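The same two facts can also be read programmatically from the output of kubectl get node k8s-node-1 -o json. A minimal sketch that decodes only the fields it needs; the node type and the kubeletAddress function are hypothetical, and the sketch simply takes the InternalIP address, whereas the API server's real lookup can be configured to prefer other address types.

```go
package main

import (
	"encoding/json"
	"errors"
	"fmt"
	"net"
	"strconv"
)

// node declares only the Node fields this sketch needs.
type node struct {
	Status struct {
		Addresses []struct {
			Type    string `json:"type"`
			Address string `json:"address"`
		} `json:"addresses"`
		DaemonEndpoints struct {
			KubeletEndpoint struct {
				Port int `json:"Port"` // capital P, matching map[Port:10250] above
			} `json:"kubeletEndpoint"`
		} `json:"daemonEndpoints"`
	} `json:"status"`
}

// kubeletAddress returns "InternalIP:port" for a Node serialized as JSON.
func kubeletAddress(data []byte) (string, error) {
	var n node
	if err := json.Unmarshal(data, &n); err != nil {
		return "", err
	}
	port := n.Status.DaemonEndpoints.KubeletEndpoint.Port
	for _, a := range n.Status.Addresses {
		if a.Type == "InternalIP" {
			return net.JoinHostPort(a.Address, strconv.Itoa(port)), nil
		}
	}
	return "", errors.New("node has no InternalIP address")
}

func main() {
	// Hand-written excerpt shaped like "kubectl get node k8s-node-1 -o json".
	sample := []byte(`{"status": {
		"addresses": [{"type": "InternalIP", "address": "192.168.205.11"},
		              {"type": "Hostname", "address": "k8s-node-1"}],
		"daemonEndpoints": {"kubeletEndpoint": {"Port": 10250}}}}`)
	addr, err := kubeletAddress(sample)
	if err != nil {
		panic(err)
	}
	fmt.Println(addr) // 192.168.205.11:10250
}
```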

Now it's time to check the network. Is there a connection to the worker node (192.168.205.11)? There it is! If you kill the exec process, the connection disappears, so I know it was established by the api-server as a result of the exec command.

```
// master node
$ netstat -atn |grep 192.168.205.11
tcp        0      0 192.168.205.10:37870    192.168.205.11:10250    ESTABLISHED
…
```

The connection between kubectl and the api-server is still open. In addition, there is another connection linking the api-server and the kubelet.
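Conceptually, once the protocol has been upgraded, the api-server just shuttles bytes between these two connections. A minimal sketch of such a bidirectional relay, assuming both sides are plain net.Conn values; the relay and demo functions are hypothetical, and the real upgradeaware.go proxy also handles the HTTP upgrade handshake, TLS and error reporting, which are omitted here.

```go
package main

import (
	"fmt"
	"io"
	"net"
	"strings"
)

// relay copies bytes in both directions between the client-facing connection
// and the backend connection until one side finishes, then closes both.
func relay(client, backend net.Conn) {
	done := make(chan struct{}, 2)
	pipe := func(dst, src net.Conn) {
		io.Copy(dst, src) // returns on EOF or when either connection is closed
		done <- struct{}{}
	}
	go pipe(backend, client) // kubectl -> kubelet (stdin)
	go pipe(client, backend) // kubelet -> kubectl (stdout, stderr)
	<-done
	client.Close()
	backend.Close()
	<-done // wait for the other direction to notice the close
}

// demo simulates kubectl, the relay and a "kubelet" that upper-cases its input.
func demo(msg string) string {
	kubectlSide, proxyClient := net.Pipe()
	proxyBackend, kubeletSide := net.Pipe()
	finished := make(chan struct{})
	go func() { relay(proxyClient, proxyBackend); close(finished) }()
	go func() {
		buf := make([]byte, len(msg))
		if _, err := io.ReadFull(kubeletSide, buf); err == nil {
			kubeletSide.Write([]byte(strings.ToUpper(string(buf))))
		}
		kubeletSide.Close() // ending the session tears the relay down
	}()
	kubectlSide.Write([]byte(msg))
	reply := make([]byte, len(msg))
	io.ReadFull(kubectlSide, reply)
	<-finished
	return string(reply)
}

func main() {
	fmt.Println(demo("hello")) // HELLO
}
```

This also matches the observation above: as long as the session lives, both connections (kubectl to api-server and api-server to kubelet) stay open, and closing one end tears down the other.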

3. Activity on the worker node

Now let's connect to the worker node and see what happens there.

First of all, we see that the connection is established here too (second line); 192.168.205.10 is the IP of the master node:

```
// worker node
$ netstat -atn |grep 10250
tcp6       0      0 :::10250                :::*                    LISTEN
tcp6       0      0 192.168.205.11:10250    192.168.205.10:37870    ESTABLISHED
```

And what about our sleep command? Hooray, it's there too!

```
// worker node
$ ps -afx
...
31463 ?        Sl     0:00      \_ docker-containerd-shim 7d974065bbb3107074ce31c51f5ef40aea8dcd535ae11a7b8f2dd180b8ed583a /var/run/docker/libcontainerd/7d974065bbb3107074ce31c51
31478 pts/0    Ss     0:00          \_ sh
31485 pts/0    S+     0:00              \_ sleep 5000
…
```

But wait: how did the kubelet start it? The kubelet runs a daemon that serves an API on its port for requests from the api-server (pkg/kubelet/server/streaming/server.go).

The kubelet computes a response endpoint for exec requests:

```go
func (s *server) GetExec(req *runtimeapi.ExecRequest) (*runtimeapi.ExecResponse, error) {
	if err := validateExecRequest(req); err != nil {
		return nil, err
	}
	token, err := s.cache.Insert(req)
	if err != nil {
		return nil, err
	}
	return &runtimeapi.ExecResponse{
		Url: s.buildURL("exec", token),
	}, nil
}
```

(pkg/kubelet/server/streaming/server.go)

Don't be confused: what it returns is not the result of the command, but an endpoint for communication:

```go
type ExecResponse struct {
	// Fully qualified URL of the exec streaming server.
	Url string
	// ...
}
```

(cri-api/pkg/apis/runtime/v1alpha2/api.pb.go)

The kubelet relies on the RuntimeServiceClient interface, which is part of the Container Runtime Interface (we have written more about it, for example, here. Translator's note). It simply uses gRPC to call a method through the Container Runtime Interface:

```go
type runtimeServiceClient struct {
	cc *grpc.ClientConn
}
```

(cri-api/pkg/apis/runtime/v1alpha2/api.pb.go)

```go
func (c *runtimeServiceClient) Exec(ctx context.Context, in *ExecRequest, opts ...grpc.CallOption) (*ExecResponse, error) {
	out := new(ExecResponse)
	err := c.cc.Invoke(ctx, "/runtime.v1alpha2.RuntimeService/Exec", in, out, opts...)
	if err != nil {
		return nil, err
	}
	return out, nil
}
```

(cri-api/pkg/apis/runtime/v1alpha2/api.pb.go)

The container runtime is responsible for implementing RuntimeServiceServer.

In that case, we should see a connection between the kubelet and the container runtime, right? Let's check.

Run this command before and after the exec command and look at the differences. In my case, the difference is:

```
// worker node
$ ss -a -p |grep kubelet
...
u_str  ESTAB 0 0  * 157937  * 157387  users:(("kubelet",pid=5714,fd=33))
...
```

Hmm... A new connection via Unix sockets between the kubelet (pid=5714) and something unknown. What could it be? That's right, it's Docker (pid=1186)!

```
// worker node
$ ss -a -p |grep 157387
...
u_str  ESTAB 0 0  * 157937  * 157387  users:(("kubelet",pid=5714,fd=33))
u_str  ESTAB 0 0  /var/run/docker.sock 157387  * 157937  users:(("dockerd",pid=1186,fd=14))
...
```

As you remember, this is the Docker daemon process (pid=1186) that runs our command:

```
// worker node
$ ps -afx
...
 1186 ?        Ssl    0:55 /usr/bin/dockerd -H fd://
17784 ?        Sl     0:00  \_ docker-containerd-shim 53a0a08547b2f95986402d7f3b3e78702516244df049ba6c5aa012e81264aa3c /var/run/docker/libcontainerd/53a0a08547b2f95986402d7f3
17801 pts/2    Ss     0:00      \_ sh
17827 pts/2    S+     0:00          \_ sleep 5000
...
```

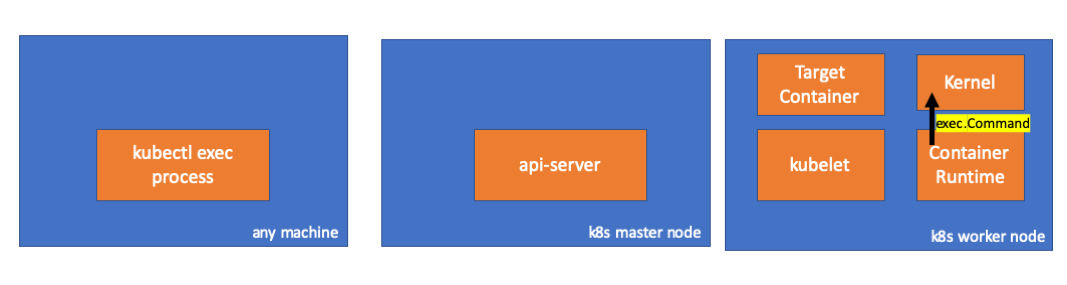

4. Activity in the container runtime

Let's look at the CRI-O source code to understand what is going on. The logic in Docker is similar.

There is a server responsible for implementing RuntimeServiceServer (cri-o/server/server.go, cri-o/server/container_exec.go).

At the end of the chain, the container runtime executes the command on the worker node (cri-o/internal/oci/runtime_oci.go).

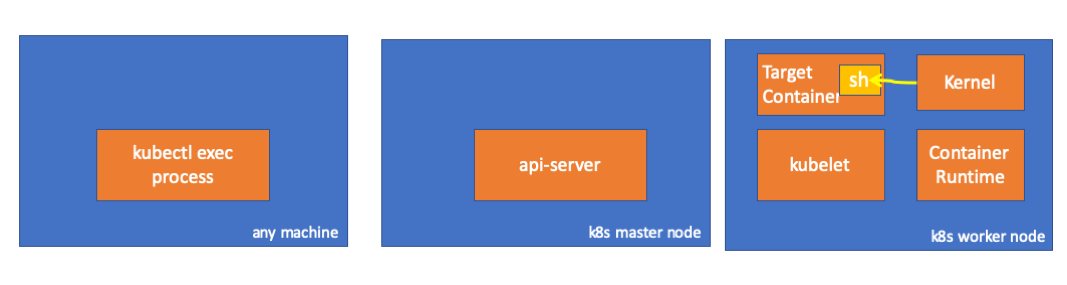

Finally, the kernel executes the commands.

Reminders

- The API server can also initiate a connection to the kubelet.

- The following connections stay open until the interactive exec session ends:

  - between kubectl and the api-server;

  - between the api-server and the kubelet;

  - between the kubelet and the container runtime.

- Neither kubectl nor the api-server can run anything on the worker nodes. The kubelet can, but to do so it also interacts with the container runtime.
